Technology | July 15, 2021

Data optimization at the edge with local data processing

The industrial world is being driven toward ever closer integration, from sensors to the cloud. Industries need to start connecting their machinery and production to shared networks so that they can achieve, for example, Industrie 4.0 compliant, data-driven production.

The first step with centralized data collection was to get data into a central database where it could be processed and analyzed. In most cases, this was done by streaming all available data to databases. This meant that gigabytes to terabytes of data were pushed over networks into data centers or clouds.

Processing data locally

Companies are now starting to realize that simply streaming all data out and then back in isn’t necessarily the most practical approach. Data first needs to be processed close to the machines, so that short-term, production-relevant data can be quickly looped back to the machine’s PLC, while long-term data can be preprocessed and sent to central databases to be analyzed and archived.
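As a rough illustration of this split, the following Python sketch reduces one window of raw readings to a single summary record for the central database and, from the same window, derives a simple limit flag that could be looped back to the PLC. The names `WindowSummary`, `summarize` and `process_window`, the sample values and the limit are all assumptions made for this example, not part of any product API.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List, Tuple


@dataclass
class WindowSummary:
    """Compact record sent upstream instead of the raw samples."""
    count: int
    minimum: float
    maximum: float
    average: float


def summarize(samples: List[float]) -> WindowSummary:
    """Reduce one window of raw readings to a single summary record."""
    return WindowSummary(len(samples), min(samples), max(samples), mean(samples))


def process_window(samples: List[float], limit: float) -> Tuple[bool, WindowSummary]:
    """Split one window into a short-term signal and a long-term record.

    over_limit: hypothetical flag that would be looped back to the PLC.
    summary:    aggregate that would be forwarded to the central database.
    """
    over_limit = any(value > limit for value in samples)
    return over_limit, summarize(samples)


# Illustrative data: ten raw temperature readings collapse into one record.
readings = [70.1, 70.4, 70.2, 71.0, 75.3, 70.8, 70.5, 70.3, 70.2, 70.1]
alarm, summary = process_window(readings, limit=75.0)
```

In this sketch only the four summary values leave the cell, while the limit check stays local and can react without a round trip to the data center.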

Processing data close to production, while keeping a fast and reliable connection to the machine’s brains, requires a platform and a concept of how and where the data can be processed efficiently.

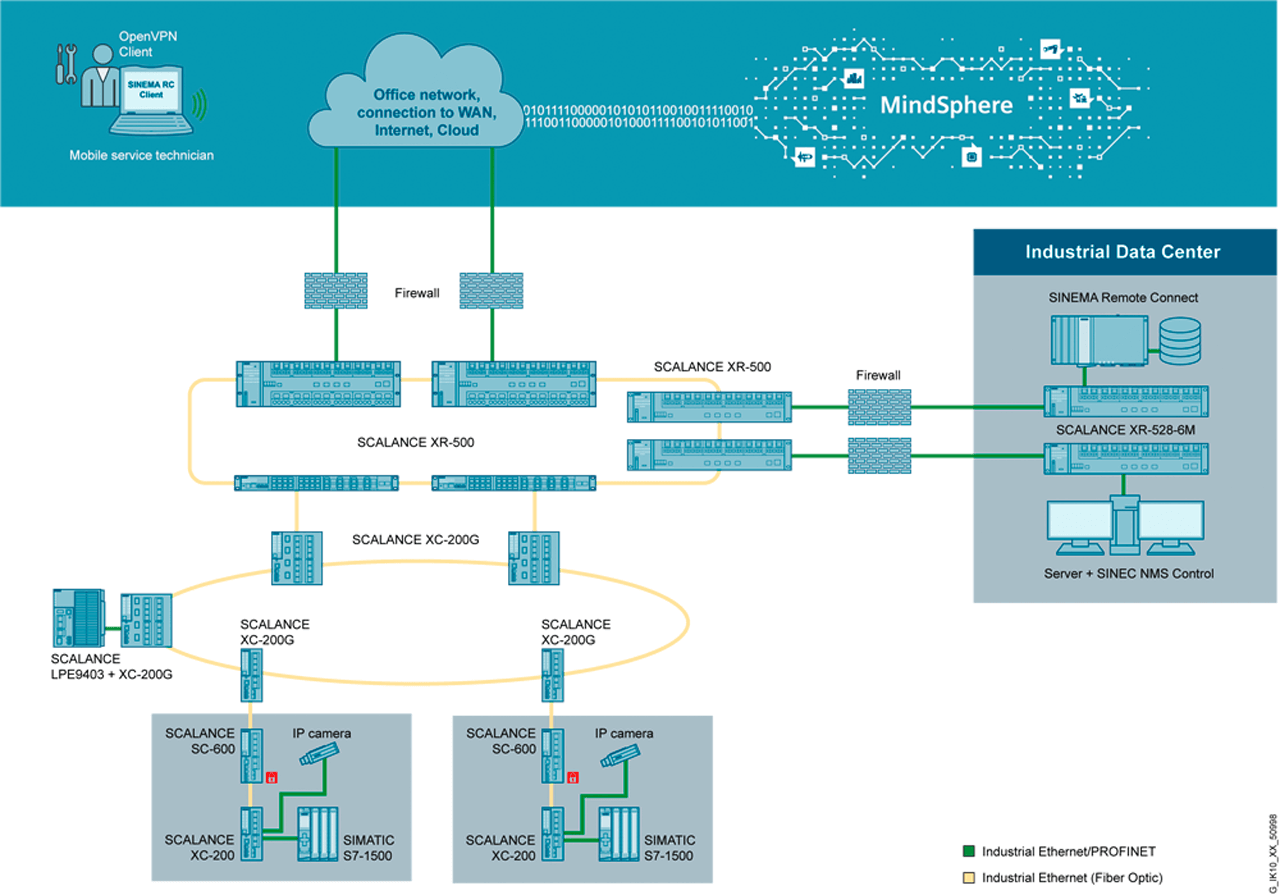

Local processing engine on the shop floor.

Body and soul for production

Hardware-wise, this platform should have the same “body” and characteristics as the production machine itself: temperature endurance, maintenance-free operation, and industrial-grade components that have the same life cycle as the machine.

An ARM processor architecture with long-life memory chips is a good solution for this task, due to its fast and reliable operation and low power usage. This architecture is already used in industrial network components and PLCs.

In addition to reliable hardware, the local processing engine needs “a soul”: an operating system that can grow and evolve according to needs. This is an obvious place for a Linux environment, and Debian, the “mother” of many Linux distributions, fills it.

This platform needs to be able to analyze and process data, run security applications (IAD, IDS, firewalls), be open to customized solutions, run containerized applications and establish secure connections to different platforms and ecosystems.

Faster and flexible commissioning

To spice up the operating system with fast and simple distribution of Edge applications, the Docker© platform offers a good and widely used solution.

Just by typing “apt-get install” <application name> or “docker pull” <container name> in the command line, or with a couple of mouse clicks in the container tooling or in the Industrial Edge central management platform, applications can be quickly and easily commissioned on all local processing platforms.
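To make the command-line route concrete, here is a minimal Python sketch that builds, but does not execute, the commands for one device. The function name `commissioning_commands` and the package and image names are hypothetical and chosen only for illustration; `apt-get install` and `docker pull` are the standard Debian and Docker commands.

```python
from typing import List


def commissioning_commands(packages: List[str], images: List[str]) -> List[List[str]]:
    """Build (but do not run) the install and pull commands for one device."""
    commands = [["apt-get", "install", "-y", name] for name in packages]
    commands += [["docker", "pull", image] for image in images]
    return commands


# Hypothetical package and image names, for illustration only.
for argv in commissioning_commands(["example-tool"], ["example/analyzer:1.0"]):
    print(" ".join(argv))
```

On a real device, each command list could be handed to `subprocess.run` by a user with sufficient privileges. The same list can be reused for every local processing platform that needs the same set of applications.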

This allows for a faster and more flexible way to do commissioning for various applications and gives customers a breeding ground for their data processing projects.

Multitasker for the shop floor

There are production plants and machines that are connected to factory networks as segmented network cells. Communication between the cell and the SCADA system has already been set up and is working.

For a data mining application, the user has to decide which data must be collected from the production plant and how often. The SCADA system already collects data from the machines and plants, but only the restricted set needed for operation and monitoring. Adding new data points and forwarding them to upper-level systems takes a lot of effort and may require an upgrade of the SCADA system, which is typically not wanted.

Placing a processing engine that can flexibly gather and process all kinds of data inside or close to the cells sounds like a good option. The solution would be a small, cell-dedicated application that talks to the machine transparently, without any effect on existing communications.
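The collection plan such a cell application works from (which data, and how often) could be sketched as follows. This is a minimal Python example under stated assumptions: the `DataPoint` type, the `due_points` helper, the tag names and the intervals are all invented for illustration and do not correspond to a real machine or protocol.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DataPoint:
    name: str        # hypothetical tag name on the machine
    interval_s: int  # how often to collect the value, in seconds


def due_points(points: List[DataPoint], elapsed_s: int) -> List[str]:
    """Return the names of the data points to read at this second."""
    return [p.name for p in points if elapsed_s % p.interval_s == 0]


# Illustrative plan: fast-changing values are read often, counters rarely.
PLAN = [
    DataPoint("spindle_temperature", 1),
    DataPoint("motor_current", 5),
    DataPoint("part_counter", 60),
]
```

Calling `due_points(PLAN, 5)` selects only the temperature and current tags, so slow-changing values are not read more often than the plan asks for. A new data point is added by extending `PLAN`, without touching the existing SCADA configuration.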

The next step is to establish who will create this application and where it will be hosted. The decision has been made that IT takes responsibility for developing the software, while automation takes responsibility for maintaining the hardware on the shop floor. An ideal hardware solution is a processing engine that allows open-source applications and a container-based architecture.

While the data mining project is running, it is clear that an additional application could be integrated to improve maintenance of the production machines in the factory. These enhancements would change production from reactive troubleshooting to proactive and preventative maintenance. The company decides to pursue these changes in order to reap these benefits.

Automation wants applications that are simple to use and that provide relevant information. A search across different sources reveals several options for adopting existing open-source applications. A deeper analysis shows that both the data mining and troubleshooting applications should be hosted on the same hardware, because they share the same requirements for data source connectivity and maintenance. As a solution, they will need a multitasker platform for the shop floor.

The perfect match is an application developed by Siemens for industrial use, specifically for process network analysis. This application is also built to run as a container on an ARM-based device called Scalance LPE9403, a local processing engine with the power to run multiple applications simultaneously as containers.

By locating this device at the cell or aggregation level in the automation network, they can concentrate data gathering and analysis on one hardware platform without compromising application availability.

Integrating Edge computing into the shop floor with a robust and flexible platform can optimize machine and plant availability and performance, improve data-driven decision making and enable machine builders to develop new business models.